Video has become the nervous system of modern security programs. Retail, transit, healthcare, campuses, and workplaces all rely on cameras for safety, loss prevention, and incident reconstruction. The same footage that deters theft or resolves a liability claim can also expose intimate moments, sensitive locations, or biometric identifiers if it leaks or is misused. Strong anonymization and redaction are no longer optional add-ons. They are part of baseline data protection in video surveillance, required by law in many jurisdictions and expected by the public.

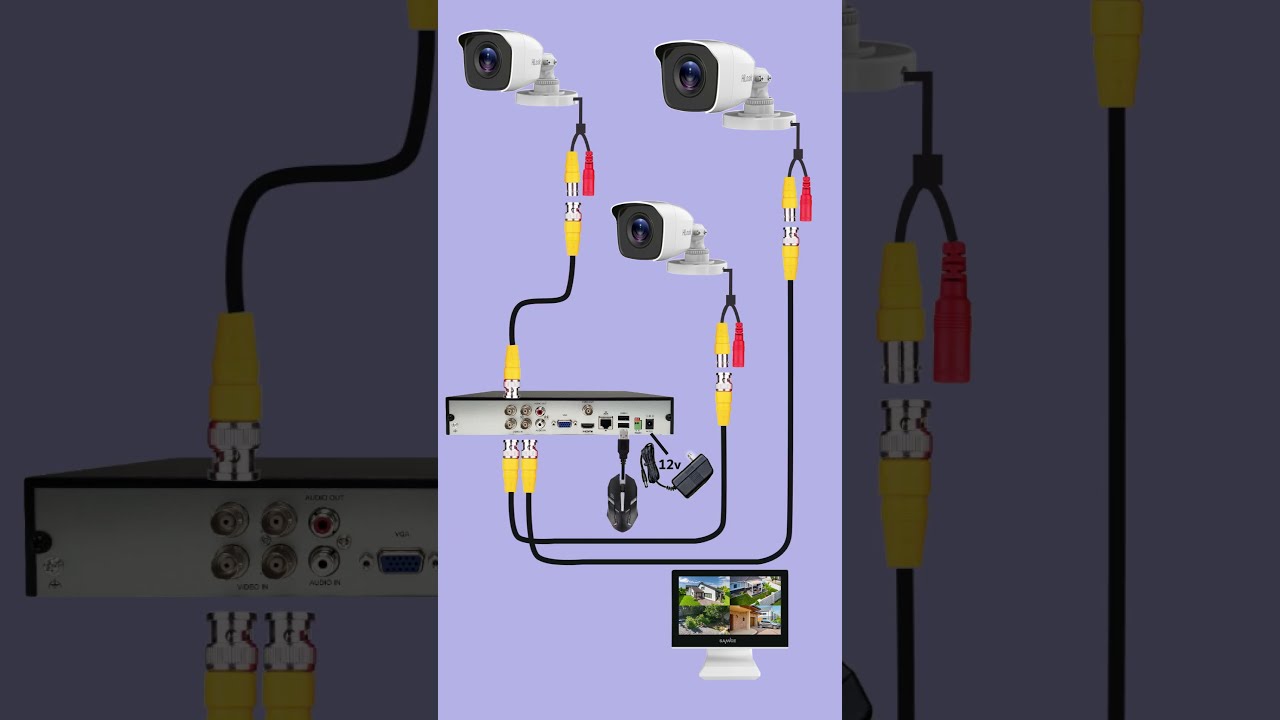

This field is bigger than blur filters. Getting it right means designing the end‑to‑end pipeline for privacy, from camera placement and recording modes to encryption for CCTV systems, key management, access controls, and retention policies. When video must be shared, redaction workflows, auditability, and export controls determine whether you protect by design or scramble after the fact.

The practical goal of anonymization

The objective is not to sterilize video until it becomes useless. The goal is to reduce identifiability to a level that matches the use case and the legal standard. Retail investigators may need to see how a suspect moved through a store without exposing the faces of bystanders. Facilities teams might need to verify a door was propped open while masking staff identities. Child welfare investigators need scene-level context without revealing a child’s face.

Two concepts anchor the practice. Anonymization seeks to irreversibly prevent identification, typically by removing or altering identifiers so the data can no longer be linked to a person. Pseudonymization reduces identifiability but preserves linkage through a secret mapping. Most video workflows that support accountability rely on pseudonymization during editing and review, then publish or disclose an anonymized derivative whenever possible. If later accountability still matters, you retain the original only within a secure boundary and under strict access controls.
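One common way to implement the "secret mapping" behind pseudonymization is a keyed hash. The sketch below is illustrative only: the function name, the track ID format, and the key are hypothetical, and a real deployment would load the key from a key management system rather than hard-coding it.

```python
import hashlib
import hmac


def pseudonym(track_id: str, secret_key: bytes) -> str:
    """Derive a stable label for a tracked person from a secret key.

    The same track_id always yields the same label, so reviewers can follow
    one person across clips. Without the key, the label cannot be recomputed
    from a candidate track_id.
    """
    digest = hmac.new(secret_key, track_id.encode("utf-8"), hashlib.sha256).hexdigest()
    return "person-" + digest[:12]


# Hypothetical key for illustration; keep the real one inside the secure boundary.
key = b"example-key-not-for-production"
label_a = pseudonym("cam12/track-0007", key)
label_b = pseudonym("cam12/track-0007", key)
label_c = pseudonym("cam12/track-0008", key)
print(label_a == label_b, label_a == label_c)
```

Because the labels depend on the key, destroying the key is one way to move a derivative closer to anonymized, provided the footage itself no longer carries identifiers.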

What the law actually expects

Regulators focus less on specific filters and more on outcomes. Under the GDPR and related CCTV guidance from EU regulators, identifiable personal data in video must have a lawful basis, be limited to what is necessary, and be protected through appropriate technical and organizational measures. Data subjects have rights to access and erasure, tempered by exceptions for security and legal claims. A data protection impact assessment (DPIA) is often required for large‑scale monitoring or monitoring of publicly accessible areas.

In the United States, surveillance privacy laws in California and other states concentrate on notice and purpose limitation, with extra sensitivity for audio capture and certain locations like restrooms, locker rooms, and medical areas. California’s laws lean heavily on transparency, reasonable security, and restricted secondary uses. Some municipalities add notification signage requirements or place limits on facial recognition. In workplaces, separate labor laws and union agreements often dictate what can be recorded, how long it can be kept, and how it can be used for discipline. Work with counsel, especially if you monitor shift workers, union members, or areas where people reasonably expect privacy.

Healthcare entities in the U.S. and many other countries face an added layer when video captures protected health information, even indirectly. Footage near nurse stations, registration desks, or patient rooms can inadvertently record diagnoses or medical conversations. HIPAA enforcement trends show that seemingly incidental recordings can still be regulated when they identify a patient.

For most organizations, the practical takeaway is simple. Be explicit about purpose. Limit who can view raw recordings. Apply safeguards that match the sensitivity. Secure footage transit and storage. When sharing, disclose only what is necessary, ideally with anonymization or targeted redaction. Document the decisions.

Where privacy leaks hide in video

Faces get the attention, but real leaks often come from the background. License plates, tattoo patterns, distinctive clothing, computer screens, and paperwork on desks can reveal more than a face. In retail returns, a customer’s credit card slip at the counter may be legible. In warehouses, whiteboards with production schedules or customer names often sit in the camera’s field of view. Sound complicates everything. A casual conversation can disclose a medical condition or a union grievance.

Identity can also leak through motion patterns. Reidentification research shows that gait, height, and habitual routes can be enough to tie a person to a prior clip. Full anonymization is rarely feasible for security operations, but knowing that identifiers extend beyond faces shapes better redaction choices.

Technique selection: pick for purpose, not fashion

Filters work only as well as the problem definition. Different use cases demand different privacy budgets and different failure modes. If your reviewers need to count people and track routes, obscuring faces with opaque masks preserves structure better than heavy blur. If you are disclosing to the public after an incident, you may want all biometrics removed, including faces, tattoos, and voiceprints, at the cost of making the clip less engaging.

Common approaches, roughly in order of invasiveness:

Face and body masking. Solid-color or pixelation masks applied to faces, heads, or full bodies. Good for bystanders, especially when the subject of interest remains unmasked. Avoid thin blurs that can be reversed or that leave eyes readable at high resolution.

License plate redaction. Region-specific detection followed by opaque blocking. Include both front and rear plates, and consider vehicle decals and fleet numbers. When sharing footage of traffic incidents, check the plate reflection in glossy surfaces.

Screen and document masking. Rectangular masks for monitors, handheld devices, or paperwork. The detection is often manual, but semi-automatic tools can track a selected region across frames. This matters in office and healthcare contexts.

Background replacement. Green‑screen style swaps replace identifiable backgrounds with a neutral texture while leaving people intact. Useful when the scene hides sensitive layouts or confidential signage. This is costly for moving cameras and cluttered scenes.

Silhouette or depth-based abstraction. Techniques that simplify a person to a shape with no texture, sometimes leveraging depth sensors. Preserves movement and count while removing facial detail and clothing patterns.

Audio redaction. Beep or mute sensitive segments, or apply voice anonymization to remove speaker identity. Good practice redacts names, medical details, and financial digits. Where feasible, strip audio entirely for public release, unless sound is essential to context.

Temporal redaction. Remove entire time segments that add no value but contain sensitive content, such as a child walking by. This reduces review burden and risk.

Legal-grade anonymization usually stacks methods. A public release, for example, might apply audio removal, face masking for everyone except the subject, and screen/document masking for the background.
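Temporal redaction, one of the methods above, reduces to interval arithmetic: given the clip bounds and the spans to cut, compute what remains. The following is a minimal sketch with a hypothetical helper name; times are assumed to be seconds from the start of the export.

```python
def keep_segments(clip_start, clip_end, redact):
    """Return the (start, end) spans that remain after cutting every
    interval in `redact` out of the clip. Overlapping cuts are merged."""
    kept = []
    cursor = clip_start
    for r_start, r_end in sorted(redact):
        # Clamp each cut to the clip bounds.
        r_start = max(r_start, clip_start)
        r_end = min(r_end, clip_end)
        if r_end <= r_start:
            continue  # cut lies entirely outside the clip
        if r_start > cursor:
            kept.append((cursor, r_start))
        cursor = max(cursor, r_end)
    if cursor < clip_end:
        kept.append((cursor, clip_end))
    return kept


# A 60-second clip with two overlapping cuts and one that runs past the end.
print(keep_segments(0, 60, [(10, 15), (12, 20), (50, 70)]))
# → [(0, 10), (20, 50)]
```

The resulting spans can then be passed to whatever editor or transcoder performs the actual cut.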

How detection drives reliability

Everything hinges on detection. If you do not find the face, you cannot mask it. Modern pipelines combine several detection models to boost recall in real‑world conditions, including low light, motion blur, occlusions, and partial profiles. A few hard truths from fieldwork:

- Face detectors miss more when hats, masks, or side profiles dominate. Adding head and person detectors improves coverage.
- License plate detectors trained on one region fail in another. Plates vary in aspect ratio, color, font, and presence of stacked characters.
- Reflections and screens often require manual annotations. Even strong object detectors struggle with glare and angled surfaces.
- False positives are cheap to fix. False negatives leak privacy. Tune for recall, then add a fast human review pass to prune obvious mistakes.

Tools that track detected objects across frames reduce the manual burden. Once a face is tagged, tracking maintains the mask even when the detector drops briefly during a turn or occlusion. In crowded scenes, multi-object trackers help maintain identities frame to frame, but they can also propagate mistakes. This is where guardrails matter: require reviewers to verify long tracks and spot-check scenes with dense motion or complex lighting.
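The idea of holding a mask through brief detector dropouts can be sketched without any tracking library. This simplified version reuses the last known box for a limited number of frames and flags those frames for review. Real trackers also estimate motion, which this sketch does not attempt. The function name and the `max_gap` default are assumptions for illustration.

```python
def hold_masks(detections, max_gap=5):
    """detections: one entry per frame, either a box tuple or None.

    Returns one dict per frame. When the detector drops out, the last known
    box is reused for up to max_gap frames and marked held=True so a
    reviewer can verify it.
    """
    out = []
    last_box = None
    gap = 0
    for box in detections:
        if box is not None:
            last_box, gap = box, 0
            out.append({"box": box, "held": False})
        elif last_box is not None and gap < max_gap:
            gap += 1
            out.append({"box": last_box, "held": True})
        else:
            last_box = None  # gap too long: stop carrying a stale box forward
            out.append({"box": None, "held": False})
    return out


frames = [(10, 10, 50, 50), None, None, (12, 10, 52, 50), None, None, None, None]
for i, result in enumerate(hold_masks(frames, max_gap=2)):
    print(i, result)
```

Surfacing the `held` flag supports the guardrail described above: reviewers can jump straight to frames where the mask was carried rather than detected.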

Practical redaction workflow that teams actually use

Rushing straight into pixelation wastes time. Teams work faster when they triage, stabilize, then redact. A good flow looks something like this:

- Intake and isolation. Pull only the cameras and windows you need for the incident. Copy to a working folder that inherits strict permissions and logging. If the DVR/NVR exports proprietary formats, transcode to a lossless or high-bitrate mezzanine for editing, not a low‑quality MP4 that smears details.
- Stabilize and sync. Correct for rolling shutter and stabilize shaky footage, especially for body-worn and mobile cameras. Sync multi‑camera clips on a common clock or a visual cue. Clean audio with light denoise if you plan any voice anonymization.
- Automated detection pass. Run face, person, and license plate detectors with recall‑heavy settings. Track across frames. Mark potential screens and high‑risk regions for manual review.
- Human verification. Review the hit list at 2x to 4x speed, pausing where detections are dense. Add manual masks for missed faces, identifying tattoos, or papers on desks.
- Mask selection and quality check. Use solid masks for legal-grade redaction. Pixelation or blur is acceptable for internal use, but treat it as a weaker control. Check masks on motion beats and in transitions, where misses cluster.
- Export and policy tagging. Export two artifacts: a redacted copy for sharing and a minimal clip for the internal record. Attach metadata: purpose, recipients, retention timeline, and reviewer name. Log every export.

Notice the intentional split between automation and accountability. Machines accelerate the routine. Humans own the edge cases and the final decision.
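The export and policy tagging step can be as simple as one structured record per exported file. The sketch below uses the metadata fields named in the workflow. The SHA-256 fingerprint and the timestamp are additions assumed here for traceability, and the function names and sample values are hypothetical.

```python
import hashlib
import json
from datetime import datetime, timezone


def export_record(clip_bytes, purpose, recipients, retention_days, reviewer):
    """Build a metadata record for one exported clip."""
    return {
        # Fingerprint of the exact file that left the system (an assumption,
        # not part of the workflow text above).
        "sha256": hashlib.sha256(clip_bytes).hexdigest(),
        "purpose": purpose,
        "recipients": list(recipients),
        "retention_days": retention_days,
        "reviewer": reviewer,
        "exported_at": datetime.now(timezone.utc).isoformat(),
    }


def append_to_log(log_lines, record):
    """Append the record as one JSON line; a real log would be append-only storage."""
    log_lines.append(json.dumps(record, sort_keys=True))


log = []
record = export_record(
    b"placeholder clip bytes",
    purpose="insurance claim",
    recipients=["claims@example.org"],
    retention_days=90,
    reviewer="j.doe",
)
append_to_log(log, record)
print(log[0])
```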

Data protection starts at the camera, not in the editing suite

Redaction cannot fix a broken storage and access model. Protecting recorded data means hardening the entire chain. Encrypt footage at rest on the recorder and in transit to the VMS. Most modern recorders support TLS for streaming and storage-level encryption with secure key management. When in doubt, prefer systems where keys live in a hardware module or external KMS rather than in plain text on the device.

Secure remote camera access is a common weak point. Disable default credentials. Put cameras and recorders on a segmented network. Use VPN or a zero trust gateway with multi‑factor authentication. Block direct inbound access from the public internet. Tie administrative actions to named accounts, not shared logins. Audit access routinely and alert on unusual patterns, such as midnight logins from unfamiliar IPs.
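Alerting on unusual access patterns can start as a simple filter over the audit log. This sketch flags logins from unfamiliar IPs during quiet hours. The event shape, the field names, and the quiet-hours window are all assumptions, not the format of any particular VMS.

```python
from datetime import datetime


def unusual_logins(events, known_ips, quiet_start=0, quiet_end=5):
    """Return login events from IPs outside known_ips whose local hour
    falls in [quiet_start, quiet_end)."""
    flagged = []
    for event in events:
        hour = datetime.fromisoformat(event["time"]).hour
        if event["ip"] not in known_ips and quiet_start <= hour < quiet_end:
            flagged.append(event)
    return flagged


# Hypothetical audit events; addresses come from documentation-reserved ranges.
events = [
    {"user": "admin.lee", "ip": "203.0.113.40", "time": "2024-03-02T00:14:00"},
    {"user": "admin.lee", "ip": "198.51.100.7", "time": "2024-03-02T01:00:00"},
    {"user": "ops.kim", "ip": "203.0.113.40", "time": "2024-03-02T14:05:00"},
]
print(unusual_logins(events, known_ips={"198.51.100.7"}))
```

Only the first event is flagged: it combines an unfamiliar address with a login shortly after midnight.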

A core video storage best practice is to set retention by purpose. Retail loss prevention often needs 30 to 90 days, with longer retention for incidents. Healthcare and critical infrastructure may keep selected incident clips for years under legal hold, while routine footage rolls off quickly. Avoid open‑ended “just in case” retention. It increases breach impact and invites regulatory trouble. Apply write‑once settings or legal holds only when a specific case exists, and review those holds quarterly.
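Purpose-based retention is easy to encode as a small policy table plus a deletion check. The categories and day counts below are hypothetical placeholders; actual values belong to your policy and counsel.

```python
from datetime import date, timedelta

# Hypothetical policy table: retention in days per footage category.
RETENTION_DAYS = {"routine": 30, "incident": 365}


def is_due_for_deletion(recorded_on, category, today, legal_hold=False):
    """True when the footage has outlived its retention period.
    A legal hold always blocks deletion."""
    if legal_hold:
        return False
    return today > recorded_on + timedelta(days=RETENTION_DAYS[category])


print(is_due_for_deletion(date(2024, 1, 1), "routine", today=date(2024, 2, 1)))
# → True
print(is_due_for_deletion(date(2024, 1, 1), "routine", today=date(2024, 2, 1), legal_hold=True))
# → False
```

An unknown category raises `KeyError` rather than defaulting to a retention period, which forces every new footage type to get an explicit policy entry.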

Backups should be encrypted, tested, and minimized. Many breaches stem from forgotten NAS shares or cloud buckets. Keep inventory. Rotate credentials periodically. If you use cloud VMS, understand the provider’s encryption model and who controls keys. If you cannot explain it to an auditor in two clear sentences, you probably do not control it.

Consent, signage, and the ethics of what you do not record

Consent in video monitoring is more than a checkbox. In most public or semi‑public spaces, advance notice through signage and policy satisfies the requirement. The sign should state the operator, purpose, and contact details. In workplaces, add the policy to onboarding, distribute updates, and discuss with labor representatives where applicable. For areas with a high expectation of privacy, do not record unless a compelling legal basis exists and the design eliminates nonessential capture.

Ethical use of security footage comes down to purpose discipline. If you tell your community the cameras are for safety and incident response, do not turn around and use them to grade employee performance or shame customers on social media. Even where the law might allow a secondary use, your reputational risk grows faster than the benefit. Keep a short list of legitimate uses, review it annually, and train supervisors to say no to requests that fall outside it.

There are also occasions not to record at all. Some counseling rooms or union meeting spaces should be camera‑free. If operations demand a camera for safety, consider privacy zones that black out areas within the frame. Most modern cameras support these masks at the firmware level, which keeps sensitive pixels from ever being captured in the first place.

The nitty‑gritty of face and plate masking

Field performance depends on the details. A few lessons learned from teams that process hundreds of hours per month:

- Use a margin around detected regions. A tight face box misses chins during speech and foreheads during nods. A 10 to 20 percent pad reduces misses.
- Adjust mask opacity and edge softness carefully. Opaque rectangles leave no ambiguity in public releases. For internal review, a slightly soft edge avoids drawing undue attention to the masked region without introducing halo artifacts.
- Do not trust blur strength names. “Strong” or “Gaussian 50” means little without resolution context. At 4K, a blur that hides at 1080p may leak identity. Always test masks at the release resolution.
- For vehicles, include the window reflection in your scan. Plates reflect onto storefront glass and polished floors. A quick manual pass catches these.
- If you rely on color tracking for masks, watch for scene lighting changes that cause drift. Switch to feature tracking on corners or edges when the background shifts.

These are small choices, but the difference between a mask that slips for two frames and one that holds can determine whether a bystander is exposed.
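The margin advice translates into a few lines of arithmetic. This sketch pads a detected box by a percentage of its own size and clamps the result to the frame. The function name and the `(x1, y1, x2, y2)` pixel convention are assumptions.

```python
import math


def pad_box(box, frame_w, frame_h, pad_percent=15):
    """Grow a (x1, y1, x2, y2) box by pad_percent of its width and height
    on each side, rounding outward and clamping to the frame."""
    x1, y1, x2, y2 = box
    dx = (x2 - x1) * pad_percent / 100
    dy = (y2 - y1) * pad_percent / 100
    return (
        max(0, math.floor(x1 - dx)),
        max(0, math.floor(y1 - dy)),
        min(frame_w, math.ceil(x2 + dx)),
        min(frame_h, math.ceil(y2 + dy)),
    )


# A 100x120 face box in a 1080p frame, padded by 10 percent per side.
print(pad_box((100, 100, 200, 220), 1920, 1080, pad_percent=10))
# → (90, 88, 210, 232)
```

Rounding outward (floor on the top-left, ceil on the bottom-right) errs toward covering an extra pixel rather than exposing one.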

Audio: the forgotten risk vector

Many organizations capture audio without appreciating its sensitivity. In some states, two‑party (more precisely, all‑party) consent laws make audio a legal minefield, particularly in workplaces. Even when lawful, audio carries names, account numbers, and medical details that transcribers or viewers might not otherwise learn.

If you keep audio, set default mute on public monitors, and log who listens to original tracks. Build a fast path to remove or anonymize voices. Voice transformation can reduce recognizability, but do not rely on it for adversarial scenarios. For disclosures outside your organization, err on the side of total removal unless the sound is essential to understanding the event.

Balancing utility and privacy by design

The best privacy protection reduces the need for after‑the‑fact redaction. A few design choices pay outsized dividends:

Camera positioning. Frame away from keyboards, customer forms, and whiteboards. Slightly higher angles reduce legibility of documents while maintaining scene awareness. In customer service areas, tilt so that the counter surface is minimized.

Scene zoning. Apply in‑camera privacy masks to permanently block sensitive zones, such as doorways to restrooms or parts of a medical desk. Those pixels are never recorded, which removes a class of risk entirely.

Recording modes. Use event‑triggered recording where practical. Motion detection tuned for people rather than foliage or cars reduces capture. In parking areas, adjust zones so distant public sidewalks are out of the detection field.

Resolution discipline. Record at the minimum resolution that achieves the purpose. Higher resolution captures more identifiers and demands stronger, slower redaction. Save 4K for entrances or incident‑prone zones, not for every corridor.

Access windows. Limit who can view live and recorded streams. Default to derivatives for routine viewing, with raw access reserved for designated privacy champions or investigators. The fewer eyes on unredacted footage, the smaller your exposure.

Governance, logs, and the day the subpoena arrives

Documentation makes or breaks you when your practices are challenged. Keep a simple record: purposes, camera locations, retention times, who can access, and the redaction workflow. For GDPR and CCTV compliance, add your lawful bases, legitimate interest assessments, and the DPIA where required. When footage is shared, note the recipient, the legal basis or consent, and the redaction applied. This log does not need to be fancy. It does need to be consistent.

Subpoenas and data subject requests arrive with deadlines. Plan a triage path. Identify a privacy lead who can evaluate scope, negotiate narrowing when requests are overbroad, and choose appropriate redaction before release. In multi‑party incidents, be ready to provide copies with differentiated redactions, such as one version for internal safety review and one for public disclosure. Do not let an external party dictate your operational security. You can comply while protecting bystanders.

The human element

Even the best tooling falters without trained reviewers. Teach reviewers to scan edges, reflections, and screens. Encourage them to pause on fast motion and transitions. Give them hotkeys for quick mask adjustments, and establish a second set of eyes for sensitive releases. Build pride in getting the details right, because privacy failures rarely come from a single massive mistake. They seep through small cracks.

Cultivate a culture where privacy is part of craft, not a bureaucratic hurdle. When a floor manager asks for a clip to discipline an employee for minor tardiness, a mature team can explain the policy, offer aggregate insights if appropriate, and decline the request without drama. Those small moments define the ethical use of security footage more than any technology choice.

Technology stack: modest tools, strong results

You do not need an exotic stack to improve dramatically. Many teams succeed with a stable editor, a redaction plug‑in or dedicated tool, and a set of tuned detection models. On the infrastructure side, pick a VMS with solid encryption, role‑based access control, and good export options. Integrate with your identity provider so account changes propagate. Turn on audit logging and actually read the reports.

If you operate at scale, consider a queue‑based redaction pipeline where raw clips land in a secure bucket, workers run detection and first‑pass masks, and reviewers approve in a browser. Keep keys and secrets in a managed KMS. Use short‑lived signed URLs for any sharing. These are basic software hygiene patterns, not exotic inventions, and they map cleanly to privacy needs.
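Short-lived signed URLs follow a general pattern: sign the resource path together with an expiry time, then verify both on access. Cloud storage providers ship their own presigned URL mechanisms, which are preferable in production. The sketch below only illustrates the idea with a hypothetical host, path, and key.

```python
import hashlib
import hmac
import time
from urllib.parse import parse_qs, urlencode, urlparse


def sign_url(base_url, secret, ttl_seconds, now=None):
    """Append an expiry timestamp and an HMAC signature to base_url."""
    expires = int(now if now is not None else time.time()) + ttl_seconds
    payload = f"{base_url}|{expires}".encode("utf-8")
    sig = hmac.new(secret, payload, hashlib.sha256).hexdigest()
    return f"{base_url}?{urlencode({'expires': expires, 'sig': sig})}"


def verify_url(url, secret, now=None):
    """Accept the URL only if the signature matches and it has not expired."""
    parsed = urlparse(url)
    params = parse_qs(parsed.query)
    try:
        expires = int(params["expires"][0])
        sig = params["sig"][0]
    except (KeyError, ValueError):
        return False
    base_url = f"{parsed.scheme}://{parsed.netloc}{parsed.path}"
    payload = f"{base_url}|{expires}".encode("utf-8")
    expected = hmac.new(secret, payload, hashlib.sha256).hexdigest()
    current = now if now is not None else time.time()
    # Constant-time comparison avoids leaking signature bytes through timing.
    return hmac.compare_digest(sig.encode("utf-8"), expected.encode("utf-8")) and current <= expires


secret = b"hypothetical-signing-key"  # keep the real key in a managed KMS
url = sign_url("https://video.example.org/exports/clip-001.mp4", secret, ttl_seconds=300)
print(verify_url(url, secret))
```

Changing the path or letting the expiry pass causes verification to fail, which is what keeps a forwarded link from becoming a standing grant of access.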

Edge cases worth planning for

Body‑worn cameras in dynamic scenes. Expect wild motion, occlusion, and extreme perspective changes. Favor full‑body masks for bystanders, backed by manual spot fixes. Stabilization helps, but it can shift mask alignment, so bake masking after stabilization, not before.

Glass walls and mirrors. Retail fitting rooms often have mirrors outside, and modern offices are glass-heavy. Train reviewers to look for ghost images. Plan masks for reflections explicitly.

Night scenes with IR illumination. IR can wash faces or create hotspots that fool detectors. Tweak thresholds and add manual passes in these segments. In some cases, switching to silhouette masking is the more reliable choice.

Children and protected groups. Apply stricter defaults. For minors, prefer full-body anonymization in public releases, not just face masking. For investigative work, limit distribution to essential staff with time‑bound access.

Multi‑jurisdiction campuses. A hospital with sites in the EU and U.S. or a retailer operating in California and other states needs policy harmonization that meets the strictest baseline across sites. It is simpler to apply strong rules everywhere than to juggle fragmented practices.

What competent execution looks like

A well‑run program reads like this: cameras placed with purpose, signage clear and respectful, recording tuned to reduce excess, encryption everywhere, access tight and logged. When an incident occurs, the team isolates the relevant windows, runs a high‑recall detection pass, verifies masks, and exports two or three fit‑for‑purpose versions. Sharing outside the organization happens only after legal and privacy review. Retention ticks down automatically. Logs tell the story without extra heroics. And when a data subject asks for access under a privacy law, you can provide a tailored, redacted copy without exposing others, along with a plain explanation of what you have and why.

Privacy is not a static checklist. It is a posture that evolves as tools, laws, and expectations shift. Make space for small improvements. Tidy your retention schedules. Test your export path. Tighten your remote access. Update your signage. Train a new reviewer. Each improvement reduces the chance that a bystander’s face or a patient’s medical note ends up in the wrong inbox.

The reward for this discipline is real. Investigations run smoother. Regulators see seriousness. Employees and customers trust you with difficult moments. And your organization can use the power of video responsibly, even under the hardest scrutiny, because you designed for privacy at every step.